A measuring method (named after Ernst Abbe) for determining the focal length of a single lens or a lens system (objective) and the positions of its principal planes on the optical axis.

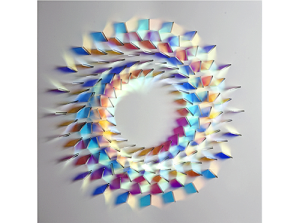

How to determine the focal length of a single lens:

The position of the lens is fixed, and for each object position the camera (or the screen) is moved until the image is in focus at its center. Different object positions therefore result in different camera or screen distances.
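A minimal Python sketch of how several focused positions could be combined, assuming the thin-lens equation 1/f = 1/g + 1/b, where the object distance g and the image (camera or screen) distance b are both measured from the lens and taken as positive. The formula, the symbols g and b, the helper name `focal_length_thin_lens` and the sample numbers are assumptions for illustration, not taken from the text above. Each pair gives one estimate of f, and averaging the estimates reduces the effect of individual focusing errors.

```python
"""Estimate the focal length of a thin lens from several focused (g, b) pairs.

Assumption (not from the source): thin-lens approximation with object distance g
and image distance b measured from the lens, both positive, so 1/f = 1/g + 1/b.
"""
from statistics import mean, stdev


def focal_length_thin_lens(pairs):
    """Return (mean f, sample standard deviation of f) for a list of (g, b) pairs."""
    # Solve 1/f = 1/g + 1/b for each pair: f = g*b / (g + b).
    estimates = [g * b / (g + b) for g, b in pairs]
    # The spread needs at least two estimates; report 0.0 for a single pair.
    spread = stdev(estimates) if len(estimates) > 1 else 0.0
    return mean(estimates), spread


if __name__ == "__main__":
    # Hypothetical, noise-free distances in mm for a lens with f = 100 mm.
    measurements = [(150.0, 300.0), (200.0, 200.0), (300.0, 150.0)]
    f, df = focal_length_thin_lens(measurements)
    print(f"f = {f:.1f} mm +/- {df:.1f} mm")
```

With real data the standard deviation gives a rough idea of how consistently the image was focused.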


How to determine the focal length of an objective (lens system):

The position of the objective (and of the individual lenses in it) is fixed, and an arbitrary point O on the optical axis is marked as the reference point, for example the center of the objective or the center of its first lens element.

For several object positions, we now measure the distance x from the reference point to the object, the distance x’ from the reference point to the image, and the image size B.

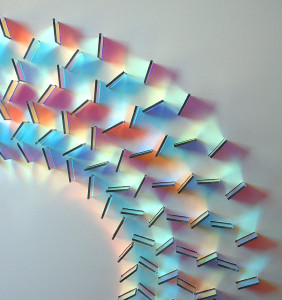

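This gives a list of magnifications

$$m_1 = \frac{B_1}{G}, \quad m_2 = \frac{B_2}{G}, \quad \ldots, \quad m_n = \frac{B_n}{G},$$

where G is the object size and the magnifications are taken as positive magnitudes,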

and, for each measurement i, the equation from the reference point to the object

$$x_i = f\left(1 + \frac{1}{m_i}\right) + h$$

and the equation from the reference point to the image:

$$x'_i = f\,(1 + m_i) + h'$$

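Here h and h’ are the signed distances from the object-side and the image-side principal plane, respectively, to the reference point. Because both equations are linear, plotting x against 1 + 1/m and x’ against 1 + m gives straight lines whose slope is f and whose intercepts are h and h’.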
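A minimal Python sketch of the evaluation, fitting each relation with ordinary least squares. It assumes the model x = f(1 + 1/m) + h and x’ = f(1 + m) + h’ with m = B/G taken as a positive magnitude, a known object size G, and all distances in one unit. The helper names `linear_fit` and `abbe_fit` and the synthetic numbers are hypothetical, chosen only for illustration.

```python
"""Sketch of the Abbe evaluation: fit f, h and h' from (x, x', B) measurements.

Assumptions (not stated in the source): all distances use one unit, the object
size G is known, m = B / G is the positive magnitude of the magnification, and
the model is x = f*(1 + 1/m) + h and x' = f*(1 + m) + h'.
"""


def linear_fit(u, y):
    """Ordinary least-squares line y = slope * u + intercept; returns (slope, intercept)."""
    n = len(u)
    u_mean = sum(u) / n
    y_mean = sum(y) / n
    s_uu = sum((ui - u_mean) ** 2 for ui in u)
    if s_uu == 0:
        raise ValueError("need at least two different magnifications")
    s_uy = sum((ui - u_mean) * (yi - y_mean) for ui, yi in zip(u, y))
    slope = s_uy / s_uu
    return slope, y_mean - slope * u_mean


def abbe_fit(x, x_img, image_sizes, object_size):
    """Return (f from object side, h, f from image side, h') for the hypothetical model."""
    m = [b / object_size for b in image_sizes]                 # m_i = B_i / G
    f_obj, h = linear_fit([1 + 1 / mi for mi in m], x)         # x  versus 1 + 1/m
    f_img, h_prime = linear_fit([1 + mi for mi in m], x_img)   # x' versus 1 + m
    return f_obj, h, f_img, h_prime


if __name__ == "__main__":
    # Synthetic, noise-free data generated for f = 50, h = 5, h' = -10, G = 10.
    x = [155.0, 105.0, 80.0]
    x_img = [65.0, 90.0, 140.0]
    image_sizes = [5.0, 10.0, 20.0]
    f_obj, h, f_img, h_prime = abbe_fit(x, x_img, image_sizes, object_size=10.0)
    print(f"f = {f_obj:.2f} / {f_img:.2f}, h = {h:.2f}, h' = {h_prime:.2f}")
```

Fitting both lines gives two independent estimates of f; when object and image are in the same medium (e.g. air), they should agree within the measurement error, which is a useful consistency check.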