What is the MTF of a sensor?

Ideal case:

For an ideal pixel, the shape is square and the fill factor (the percentage of the photons intended for a given pixel that actually reach that pixel) is 100%.

The rectangle function describing the square shape of the pixel is convolved with a Dirac comb (__|__|__|__|__|….__|__| ), where the spacing of the Dirac impulses is one pixel.

After the pixel shape has been convolved with the Dirac comb, we have to convolve a 2D array of such convolutions with a 2D rectangle function that has the shape of the sensor.

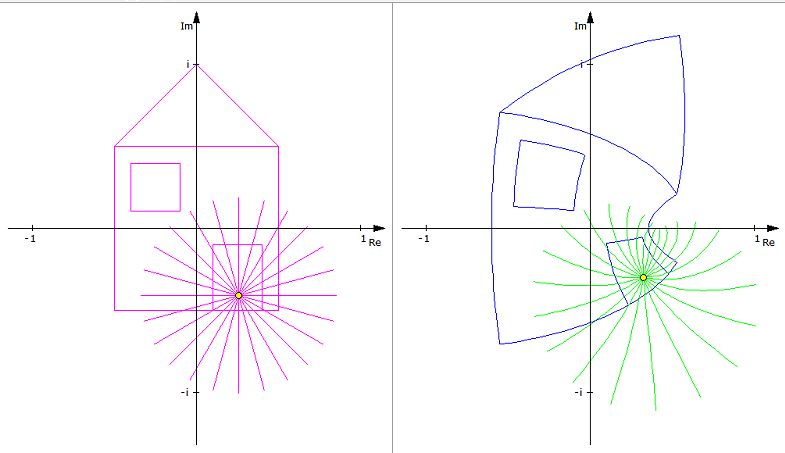

Fourier transform as a helper

When we change to the Fourier Domain, convolutions are mapped to simple multiplications.
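The convolution theorem can be checked numerically on a small discrete example. The following is a minimal sketch, assuming a circular (periodic) convolution and a naive discrete Fourier transform; the two short sequences `pixel` and `comb` are hypothetical stand-ins for the rectangle and the Dirac comb, not data from a real sensor.

```python
import cmath

def dft(signal):
    """Naive discrete Fourier transform of a short sequence."""
    n = len(signal)
    return [
        sum(signal[k] * cmath.exp(-2j * cmath.pi * m * k / n) for k in range(n))
        for m in range(n)
    ]

def circular_convolve(a, b):
    """Circular convolution of two sequences of equal length."""
    n = len(a)
    return [sum(a[j] * b[(k - j) % n] for j in range(n)) for k in range(n)]

# Hypothetical example data: a 2-sample-wide rectangle and a comb with period 4.
pixel = [1, 1, 0, 0, 0, 0, 0, 0]
comb = [1, 0, 0, 0, 1, 0, 0, 0]

# Transform of the convolution ...
lhs = dft(circular_convolve(pixel, comb))
# ... versus the element-wise product of the two transforms.
rhs = [p * c for p, c in zip(dft(pixel), dft(comb))]

max_error = max(abs(l - r) for l, r in zip(lhs, rhs))
print(f"largest difference between both sides: {max_error:.2e}")
```

Both sides agree up to floating-point rounding, which is why the chain of convolutions can be handled as a chain of multiplications.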

The Fourier transform of a rectangle function is sinc(x) = sin(πx) / (πx), and the Fourier transform of a Dirac comb is again a Dirac comb.

At 1 cycle per pixel, the function sinc(x) reaches its first zero. It is also zero at 2 cycles per pixel, 3, 4, 5, etc.
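To make the zeros concrete, here is a minimal sketch that evaluates the pixel MTF of an ideal square pixel at a few spatial frequencies. The function names `sinc` and `pixel_mtf` are chosen for illustration only, and the normalized definition sin(πx) / (πx) is assumed.

```python
import math

def sinc(x: float) -> float:
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) defined as 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def pixel_mtf(cycles_per_pixel: float) -> float:
    """MTF magnitude of an ideal square pixel with 100% fill factor."""
    return abs(sinc(cycles_per_pixel))

# Evaluate at a few frequencies, including the integer frequencies
# where the curve touches zero.
for f in (0.0, 0.25, 0.5, 1.0, 2.0, 3.0):
    print(f"{f:4.2f} cycles/pixel -> MTF {pixel_mtf(f):.3f}")
```

At the integer frequencies the printed value is zero up to floating-point rounding.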

We are only interested in this curve in the range from x = 0 to x = 1, where x is measured in cycles per pixel.

Scaled accordingly, the curve looks like this:

After the convolution with the Dirac comb, we get a curve sampled at the positions of the Dirac pulses.

If we finally convolve this curve with the rectangle function of the sensor, then instead of a mere sampling we get a more “filled” curve.

Nyquist:

The Nyquist frequency is at 1/2 cycle per pixel. For this value of x, the function has a value of about 64% (2/π ≈ 0.64).

When a sensor's documentation states that the sensor MTF is over 50%, this means it reaches about 80% of what is physically possible.
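The arithmetic behind these two numbers can be sketched as follows. The value `quoted_mtf = 0.50` is a hypothetical datasheet figure used only for illustration, and it is assumed here that the datasheet figure refers to the Nyquist frequency.

```python
import math

nyquist = 0.5  # cycles per pixel

# Ideal pixel MTF at Nyquist: sinc(0.5) = sin(pi/2) / (pi/2) = 2/pi
ideal_mtf_at_nyquist = math.sin(math.pi * nyquist) / (math.pi * nyquist)

# Hypothetical datasheet value, assumed to be quoted at Nyquist.
quoted_mtf = 0.50

# How much of the physically possible value the sensor achieves.
fraction_of_ideal = quoted_mtf / ideal_mtf_at_nyquist

print(f"ideal MTF at Nyquist: {ideal_mtf_at_nyquist:.3f}")  # 0.637
print(f"fraction of ideal:    {fraction_of_ideal:.2f}")     # 0.79
```

A quoted 50% therefore corresponds to a bit under 80% of the ideal value.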

The trouble comes from the function values for x beyond the Nyquist frequency, i.e. for x between 0.5 and 1.

These generate alias frequencies.
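A small sketch shows why such frequencies are a problem: once sampled at one sample per pixel, a pattern above Nyquist produces exactly the same pixel values as a lower-frequency pattern. The helper names `sample` and `alias_frequency` and the test frequency of 0.7 cycles per pixel are assumptions made for this illustration.

```python
import math

def sample(freq_cycles_per_pixel: float, n_pixels: int = 8) -> list:
    """Sample a cosine pattern at the integer pixel positions 0..n_pixels-1."""
    return [math.cos(2 * math.pi * freq_cycles_per_pixel * k) for k in range(n_pixels)]

def alias_frequency(freq_cycles_per_pixel: float) -> float:
    """Fold a frequency back into the range 0 .. 0.5 cycles per pixel."""
    return abs(freq_cycles_per_pixel - round(freq_cycles_per_pixel))

above_nyquist = 0.7                     # cycles per pixel, between 0.5 and 1
alias = alias_frequency(above_nyquist)  # folds back to 0.3 cycles per pixel

# After sampling, both patterns give the same pixel values.
identical = all(
    abs(a - b) < 1e-9 for a, b in zip(sample(above_nyquist), sample(alias))
)
print(f"{above_nyquist} cycles/pixel appears as {alias:.1f} cycles/pixel: {identical}")
```

The sampled image cannot tell the two patterns apart, so the 0.7 cycles per pixel detail shows up as a 0.3 cycles per pixel structure.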

If, in the combination of a lens and a sensor, the lens acts as the optical low-pass filter, the function is very predictable and few alias frequencies occur.

If, however, the pixels are about the size of the sampling period of the Dirac comb, and therefore of the pixel pitch, then the difference between the optical MTF and the sensor MTF generates the alias frequencies described above. Frequencies are generated in the resulting image that cannot be found on the object side.

For a non-perfect lens, functions of the form |sinc(x)|^n are often used instead of sinc(x).

For these, the MTF at the Nyquist frequency is only about 30% (=0.3).
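The text does not state which exponent n is meant, so the following sketch simply evaluates the model at the Nyquist frequency for a few integer exponents. The function name `lens_model_mtf` and the range of exponents are assumptions for illustration.

```python
import math

def sinc(x: float) -> float:
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) defined as 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def lens_model_mtf(cycles_per_pixel: float, n: float) -> float:
    """Model MTF of the form |sinc(x)|^n; the exponent n is a free parameter."""
    return abs(sinc(cycles_per_pixel)) ** n

nyquist = 0.5  # cycles per pixel
for n in (1, 2, 3, 4):
    print(f"n = {n}: MTF at Nyquist = {lens_model_mtf(nyquist, n):.3f}")
```

For n = 2 the value at Nyquist is about 0.41 and for n = 3 about 0.26, so a value of roughly 30% lies between these two exponents.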

In the Lensation setup for lens testing, the MTF curve is supersampled by a factor of 4. This stretches the sinc curve in the x direction by a factor of 4, so that its first zero is no longer at 1 cycle per pixel but at x0 = 4 cycles per pixel. The Nyquist frequency stays in the center between zero and x0, i.e. at 2 cycles per pixel. Because of the stretching, the MTF value at Nyquist is as high as the value that previously lay a factor of 4 further to the left, i.e. about 90%.
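The effect of the stretching can be sketched numerically. This is only an illustration under an assumption: "a factor of 4 further to the left" is read here as evaluating the original sinc curve at 0.5 / 4 = 0.125 cycles per pixel. The function name `supersampled_mtf` is hypothetical and is not part of the Lensation setup.

```python
import math

def sinc(x: float) -> float:
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) defined as 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def supersampled_mtf(cycles_per_pixel: float, factor: int = 4) -> float:
    """Sinc curve stretched along the frequency axis by the supersampling factor."""
    return abs(sinc(cycles_per_pixel / factor))

# The first zero moves from 1 to 4 cycles per pixel.
value_at_old_zero = supersampled_mtf(1.0)
value_at_new_zero = supersampled_mtf(4.0)

# Value at 0.5 cycles per pixel on the stretched curve,
# i.e. the original curve read at 0.125 cycles per pixel.
value_at_half = supersampled_mtf(0.5)

print(f"stretched curve at 1 cycle/pixel:    {value_at_old_zero:.3f}")
print(f"stretched curve at 4 cycles/pixel:   {value_at_new_zero:.3f}")
print(f"stretched curve at 0.5 cycles/pixel: {value_at_half:.3f}")
```

For the ideal sinc this reading gives a value of about 0.97, so the figure of about 90% is on the conservative side.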

When we combine the lens and the sensor, their MTFs are multiplied.

Because the function value at the (new) Nyquist frequency is about 90%, only 10% of the MTF is lost due to the sensor.
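The multiplication can be sketched with two numbers. The lens value of 0.60 is a hypothetical example, not a measurement. The sensor value of 0.90 is the approximate figure from the text.

```python
def system_mtf(lens_mtf: float, sensor_mtf: float) -> float:
    """Combined MTF of lens and sensor at one spatial frequency."""
    return lens_mtf * sensor_mtf

lens_mtf = 0.60    # hypothetical lens MTF at the frequency of interest
sensor_mtf = 0.90  # approximate sensor MTF taken from the text

combined = system_mtf(lens_mtf, sensor_mtf)

# Fraction of the lens MTF that is lost because of the sensor.
relative_loss = 1.0 - combined / lens_mtf

print(f"combined MTF:  {combined:.2f}")       # 0.54
print(f"relative loss: {relative_loss:.0%}")  # 10%
```

Whatever the lens value is, the relative loss equals 1 minus the sensor MTF, here 10%.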

So this does not explain why actual MTF curves of lenses are significantly lower than design curves, at least not by a factor of two.